Integrate LLM Frameworks

Integrate llama.cpp, LiteLLM and custom generation frameworks

The release of BERT in 2018 kicked off the language model revolution. The Transformer architecture replaced recurrent networks such as RNNs and LSTMs as the architecture of choice. Unbelievable progress was made in a number of areas: summarization, translation, text classification, entity classification and more. 2023 took things to another level with the rise of large language models (LLMs). Models with billions of parameters showed an amazing ability to generate coherent dialogue.

Looking ahead to the next wave of innovation, we may be due for another shift in model architecture. For example, the Mamba paper, which introduces a selective state space model architecture, previews a possible future beyond Transformers.

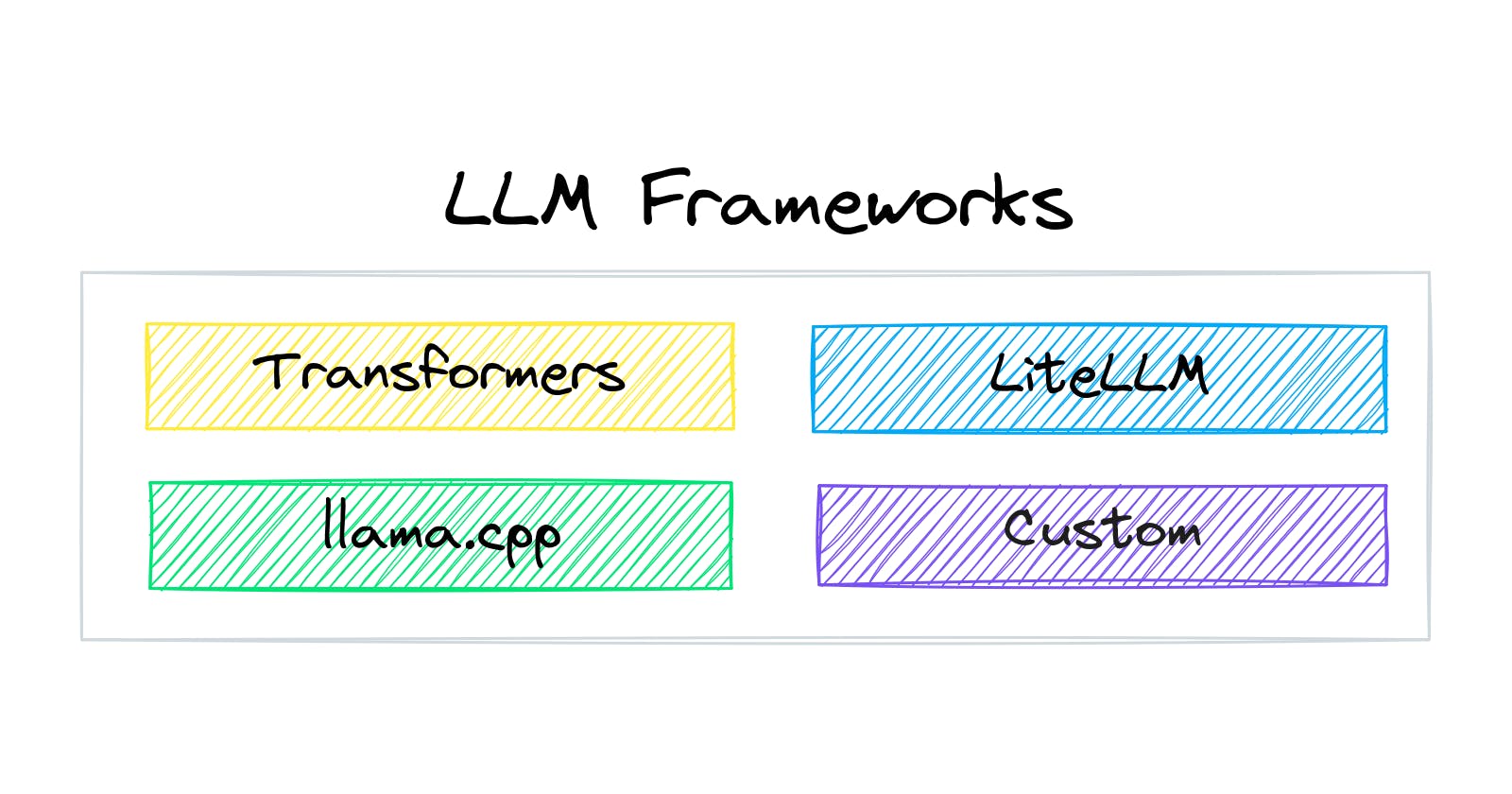

With that in mind, txtai now makes it easy to integrate additional LLM frameworks. While local models through Hugging Face Transformers continue to be the default choice, these additional frameworks broaden the options available.

This article will demonstrate how txtai can integrate with llama.cpp, LiteLLM and custom generation methods. For custom generation, we'll show how to run inference with a Mamba model.

Install dependencies

Install txtai and all dependencies. Since this article is using optional libraries, we need to install the pipeline-llm extras package.

# Install txtai (quotes stop shells such as zsh from expanding the brackets)
pip install "txtai[pipeline-llm]"

llama.cpp

First, we'll demonstrate how to load a model with llama.cpp. This framework is extremely popular with those who run LLMs locally. It provides a number of innovations for running LLMs on CPUs, especially on Macs.

The following example shows a retrieval augmented generation (RAG) pipeline with llama.cpp. txtai automatically loads llama.cpp models when working with GGUF files.
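The routing itself is txtai's job, but it helps to see what "automatically loads" involves for the model path in the example that follows. This is a minimal sketch, assuming a Hugging Face Hub path of the form `<owner>/<repo>/<file>.gguf`, of how such a path could be split into a repository id and a file name to download. `split_gguf_path` is a hypothetical helper written for illustration, not txtai's actual code.

```python
import os


def split_gguf_path(path):
    """Hypothetical helper: split a Hub GGUF path into (repo_id, filename).

    Returns None when the path is not a GGUF file, meaning some other
    framework (for example Hugging Face Transformers) would load the model.
    """
    if not path.lower().endswith(".gguf"):
        return None

    # A local GGUF file can be loaded directly, no download needed
    if os.path.exists(path):
        return (None, path)

    # Everything before the last "/" is the repository, the rest is the file name
    repo_id, _, filename = path.rpartition("/")
    return (repo_id, filename)


print(split_gguf_path("TheBloke/Mistral-7B-OpenOrca-GGUF/mistral-7b-openorca.Q4_K_M.gguf"))
# ('TheBloke/Mistral-7B-OpenOrca-GGUF', 'mistral-7b-openorca.Q4_K_M.gguf')
```

A download helper such as `huggingface_hub.hf_hub_download(repo_id=..., filename=...)` could then fetch the file. A path without the `.gguf` suffix falls through to another framework.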

from txtai import Embeddings, RAG, LLM

data = [
    "US tops 5 million confirmed virus cases",
    "Canada's last fully intact ice shelf has suddenly collapsed, forming a Manhattan-sized iceberg",
    "Beijing mobilises invasion craft along coast as Taiwan tensions escalate",
    "The National Park Service warns against sacrificing slower friends in a bear attack",
    "Maine man wins $1M from $25 lottery ticket",
    "Make huge profits without work, earn up to $100,000 a day"
]

# Create embeddings
embeddings = Embeddings(content=True, autoid="uuid5")

# Create an index for the list of text
embeddings.index(data)

# Create LLM with llama.cpp - GGUF file is automatically downloaded
llm = LLM("TheBloke/Mistral-7B-OpenOrca-GGUF/mistral-7b-openorca.Q4_K_M.gguf", verbose=True)

template = """<|im_start|>system
You are a friendly assistant. You answer questions from users.<|im_end|>
<|im_start|>user
Find the best matching text in the context for the question. The response should be the text from the context only.

Question:
{question}

Context:
{context}

Text:
<|im_end|>
<|im_start|>assistant
"""

# Create and run RAG instance
rag = RAG(embeddings, llm, output="reference", separator="\n", template=template)
result = rag("Tell me about someone lucky")

print("ANSWER:", result["answer"])
print("REFERENCE:", embeddings.search("select id, text from txtai where id = :id", parameters={"id": result["reference"]}))

ANSWER: Maine man wins $1M from $25 lottery ticket

REFERENCE: [{'id': '37e5fae7-74c2-5f1c-bf69-2962dd7470d1', 'text': 'Maine man wins $1M from $25 lottery ticket'}]

The code above builds an embeddings database, runs a vector search and passes those results to an LLM prompt. As expected, it prints the best answer and reference. The difference is that LLM inference runs through llama.cpp instead of Hugging Face Transformers.
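The three steps in that description (search, context assembly, prompt formatting) can be sketched without any dependencies. This is an illustration only, assuming a toy word-overlap score as a stand-in for txtai's vector search; `search`, `build_prompt` and the short ids are hypothetical, and this is not how txtai's RAG pipeline is implemented internally. The `separator` argument mirrors the `separator="\n"` passed to `RAG` above, and the LLM call itself is left out.

```python
import re

# Simplified version of the prompt template: {question} and {context} are filled per query
TEMPLATE = """Find the best matching text in the context for the question.

Question:
{question}

Context:
{context}

Text:
"""


def tokenize(text):
    # Lowercase word tokens, a crude stand-in for an embeddings model
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search(data, query, limit=3):
    """Toy retrieval: rank (id, text) rows by word overlap with the query."""
    terms = tokenize(query)
    scored = [(len(terms & tokenize(text)), uid, text) for uid, text in data]
    # Highest overlap first; ties keep their original order
    scored.sort(key=lambda row: row[0], reverse=True)
    return [(uid, text) for _, uid, text in scored[:limit]]


def build_prompt(data, question, separator="\n", limit=3):
    # Retrieve candidates, join them with the separator, then fill the template
    results = search(data, question, limit)
    context = separator.join(text for _, text in results)
    return TEMPLATE.format(question=question, context=context), results


# Hypothetical ids; keeping them lets an answer be traced back to a row
data = [
    ("a", "US tops 5 million confirmed virus cases"),
    ("b", "The National Park Service warns against sacrificing slower friends in a bear attack"),
    ("c", "Maine man wins $1M from $25 lottery ticket"),
]

prompt, results = build_prompt(data, "Which Maine man won the lottery?", limit=2)
print([uid for uid, _ in results])  # ['c', 'b']
print(prompt)
```

Keeping ids alongside the retrieved text is what makes a reference-style output possible: whatever the model answers can be mapped back to a specific indexed row.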

LiteLLM

LiteLLM is an abstraction framework designed to run API-based LLMs. At the time of writing, LiteLLM supports over 100 LLMs. See the full list of providers for all the options.

The following example shows an LLM call with the Hugging Face Inference API. txtai automatically detects that this is a LiteLLM model string.
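LiteLLM model strings put the provider first, followed by a slash and the provider's own model id. As a rough sketch of how such a string could be recognized, the hypothetical `parse_model_string` below splits on the first slash and checks the prefix against a small, illustrative subset of provider names. The real detection is done by txtai and LiteLLM, and the provider list here is an assumption, not LiteLLM's full registry.

```python
def parse_model_string(model, providers=("huggingface", "openai", "anthropic", "ollama")):
    """Hypothetical helper: split a LiteLLM-style "provider/model" string.

    Returns (provider, model_id), or None when the prefix is not a known provider.
    """
    # Only the first "/" separates the provider; the model id may contain more slashes
    provider, sep, model_id = model.partition("/")
    if sep and provider in providers and model_id:
        return provider, model_id
    return None


print(parse_model_string("huggingface/roneneldan/TinyStories-1M"))
# ('huggingface', 'roneneldan/TinyStories-1M')
print(parse_model_string("havenhq/mamba-chat"))
# None
```

Note that `havenhq/mamba-chat` has the same `owner/name` shape as a Hub model id, which is why a prefix check against known providers, rather than the mere presence of a slash, is needed.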

# Hugging Face Inference API
llm = LLM("huggingface/roneneldan/TinyStories-1M")
print(llm("The cat and the dog.", maxlength=5))

They are friends.

Given that most of these APIs require a paid account, we'll leave it to you to try other API models with your own credentials.

Custom Generation

Last but certainly not least, we'll demonstrate how to add a custom generation framework. For this example, we'll use the recently released mamba-chat model to build a RAG pipeline. You can read more about the model in its GitHub repository.

The following sections install support for Mamba models, define a custom MambaGeneration class and run a Mamba-based RAG pipeline.

pip install mamba-ssm

# Link CUDA libraries into environment
# These paths follow a Google Colab GPU runtime; adjust them for other environments
export LC_ALL="en_US.UTF-8"
export LD_LIBRARY_PATH="/usr/lib64-nvidia"
export LIBRARY_PATH="/usr/local/cuda/lib64/stubs"
ldconfig /usr/lib64-nvidia

import torch

from transformers import AutoTokenizer
from mamba_ssm.models.mixer_seq_simple import MambaLMHeadModel
from txtai.pipeline import Generation

class MambaGeneration(Generation):
    def __init__(self, path, template=None, **kwargs):
        super().__init__(path, template, **kwargs)

        self.tokenizer = AutoTokenizer.from_pretrained(path)
        self.tokenizer.eos_token = "<|endoftext|>"
        self.tokenizer.pad_token = self.tokenizer.eos_token

        self.model = MambaLMHeadModel.from_pretrained(path, device="cuda", dtype=torch.float16)

    def execute(self, texts, maxlength, **kwargs):
        results = []
        for text in texts:
            # Tokenize prompt
            tokens = self.tokenizer(text, return_tensors="pt").to("cuda")["input_ids"]

            # Run inference
            output = self.model.generate(input_ids=tokens, max_length=maxlength, eos_token_id=self.tokenizer.eos_token_id, **kwargs)

            # Decode results
            output = self.tokenizer.batch_decode(output)
            output = output[0].split("<|assistant|>\n")[-1].replace("<|endoftext|>", "").strip()

            results.append(output)

        return results
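The last line of `execute` does the post-processing. The code splits on the assistant marker because the decoded text still contains the chat prompt ahead of the model's reply. The standalone sketch below applies the same string operations to a hypothetical decoded string, so the cleanup can be checked without a GPU or the Mamba model.

```python
def extract_reply(decoded):
    """Keep only the text after the last assistant marker and drop the end-of-text token."""
    return decoded.split("<|assistant|>\n")[-1].replace("<|endoftext|>", "").strip()


# Hypothetical decoded output: the prompt is echoed back, followed by the reply
sample = (
    "<|system|>You are a friendly assistant.</s>\n"
    "<|user|>\nTell me something about wildlife</s>\n"
    "<|assistant|>\n"
    "The National Park Service warns against sacrificing slower friends in a bear attack."
    "<|endoftext|>"
)

print(extract_reply(sample))
# The National Park Service warns against sacrificing slower friends in a bear attack.
```

Taking the last element after `split` means the cleanup still works if the marker text appears more than once, as long as the final occurrence precedes the reply.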

llm = LLM("havenhq/mamba-chat", method="__main__.MambaGeneration")

template = """<|system|>You are a friendly assistant. You answer questions from users.</s>
<|user|>
Find the best matching text in the context for the question. The response should be the text from the context only.

Question:
{question}

Context:
{context}
</s>
<|assistant|>
"""

# Create and run RAG instance
rag = RAG(embeddings, llm, output="reference", separator="\n", template=template)
result = rag("Tell me something about wildlife")

print("ANSWER:", result["answer"])
print("REFERENCE:", embeddings.search("select id, text from txtai where id = :id", parameters={"id": result["reference"]}))

ANSWER: The National Park Service warns against sacrificing slower friends in a bear attack.

REFERENCE: [{'id': '7224f159-658b-5891-b06c-9a96cfa6a54d', 'text': 'The National Park Service warns against sacrificing slower friends in a bear attack'}]

As expected, the best answer and reference are shown.
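Both REFERENCE outputs show ids whose third group starts with `5` (`5f1c` and `5891`), the version digit of a UUID5. This is consistent with `autoid="uuid5"` in the embeddings configuration. A UUID5 is derived from a SHA-1 hash of a namespace plus a name, so the same input always produces the same id. The sketch below uses Python's `uuid` module and assumes the DNS namespace with the text as the name; whether txtai uses this exact namespace and input is an assumption, so the ids it prints are not expected to match the ones above. `content_id` is a hypothetical helper.

```python
import uuid


def content_id(text, namespace=uuid.NAMESPACE_DNS):
    # UUID5 hashes namespace + name with SHA-1, so identical text always maps to the same id
    return str(uuid.uuid5(namespace, text))


first = content_id("Maine man wins $1M from $25 lottery ticket")
again = content_id("Maine man wins $1M from $25 lottery ticket")
other = content_id("US tops 5 million confirmed virus cases")

print(first == again)             # True
print(first == other)             # False
print(uuid.UUID(first).version)   # 5
```

Deterministic ids mean re-indexing the same text yields the same id, which keeps reference lookups like the SQL query above stable across runs.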

There is still much to learn and validate about Mamba, but it's worth noting that this model has only 2.8B parameters. The Mamba architecture is one to watch moving forward!
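To put "only 2.8B parameters" in perspective, a back-of-the-envelope estimate of weight memory is parameters × bytes per parameter. The model above is loaded with `dtype=torch.float16`, which uses 2 bytes per parameter. This ignores activations, the recurrent state and framework overhead, so treat it as a lower bound. `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(parameters, bytes_per_parameter):
    """Rough size of the model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bytes_per_parameter / 1e9


# 2.8B-parameter Mamba model in float16 (2 bytes per parameter)
print(round(weight_memory_gb(2.8e9, 2), 1))  # 5.6

# For comparison, a 7B-parameter model in float16
print(round(weight_memory_gb(7e9, 2), 1))    # 14.0
```

At float16, the 2.8B model's weights fit comfortably on a single 16 GB GPU, while a 7B model in the same precision would take most of that memory before any activations.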

Wrapping up

This article demonstrated how to run LLMs through txtai using alternate LLM frameworks. It's an exciting time in AI, NLP and machine learning. What new innovations will 2024 bring? Time will tell, but txtai is ready to integrate them!