Up to this point, all the examples have worked with sections of text that were already split by some other means. What happens if we're working with documents? First we need to get the text out of these documents, then figure out how to index it to best support vector search.

This article shows how documents can have text extracted and split to support vector search and retrieval augmented generation (RAG).

Install dependencies

Install txtai and all dependencies. Since this article is using optional pipelines, we need to install the pipeline extras package.

pip install txtai[pipeline]

# Get test data

wget -N https://github.com/neuml/txtai/releases/download/v6.2.0/tests.tar.gz

tar -xvzf tests.tar.gz

# Install NLTK

import nltk

nltk.download(['punkt', 'punkt_tab'])

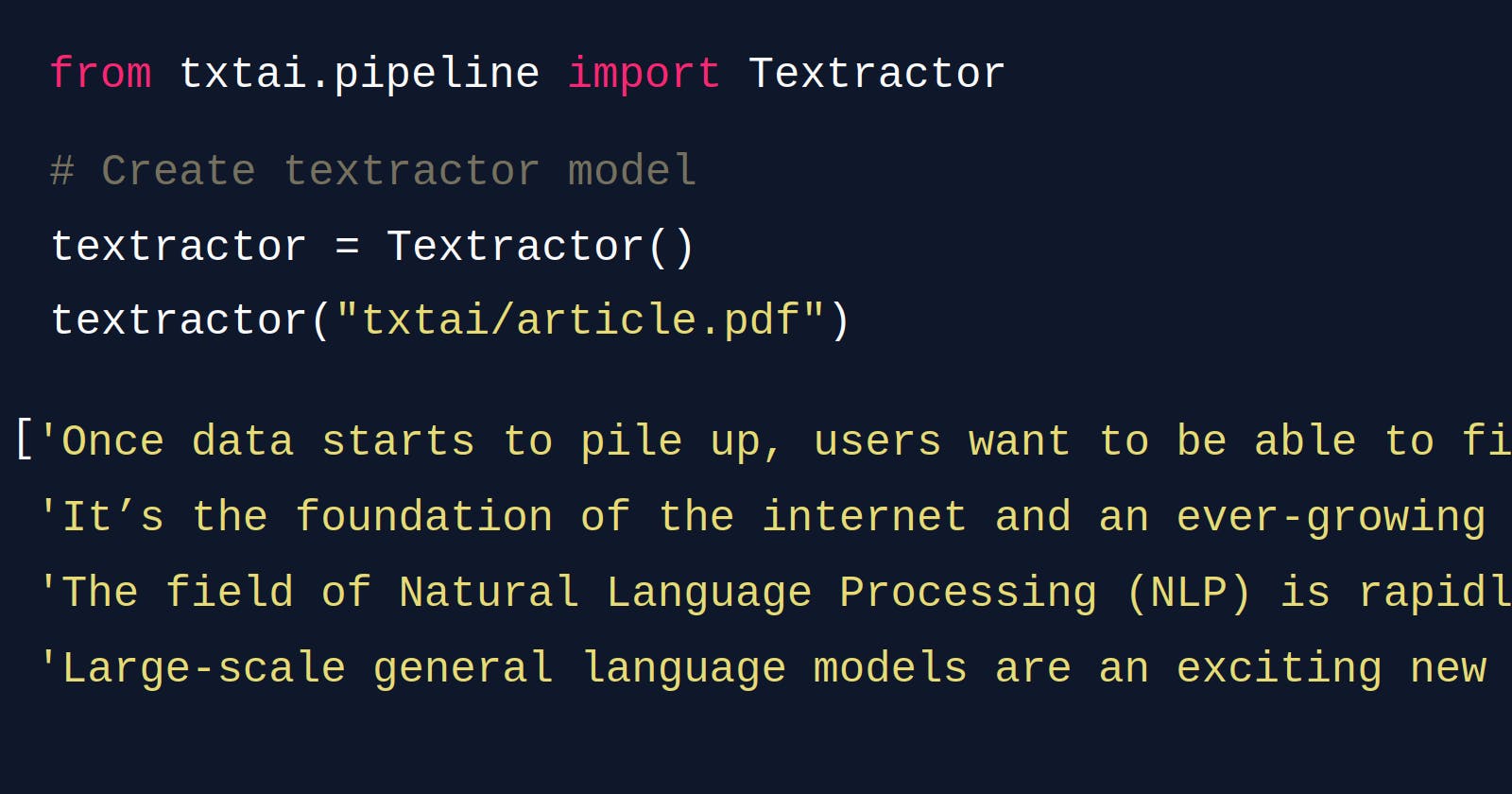

Create a Textractor instance

The Textractor instance is the main entry point for extracting text. It is backed by Apache Tika, a robust text extraction library written in Java. Apache Tika supports a large number of file formats, including PDF, Word, Excel and HTML. The Python Tika package automatically installs Tika and starts a local REST API instance used to read extracted data.

Note: This requires Java to be installed locally.
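Since the note above makes Java a prerequisite, it can help to check for it before creating a Textractor. The following is a minimal sketch using only the Python standard library. The helper name `java_available` is hypothetical and is not part of txtai or the Tika package.

```python
import shutil
import subprocess


def java_available(executable="java"):
    """Return True if the executable is on PATH and `-version` exits cleanly."""
    path = shutil.which(executable)
    if path is None:
        return False

    try:
        # A working Java install exits with code 0 for `java -version`
        result = subprocess.run([path, "-version"], capture_output=True, timeout=30)
    except (OSError, subprocess.TimeoutExpired):
        return False

    return result.returncode == 0


print("Java found" if java_available() else "Java not found, install a Java runtime first")
```

If the check fails, install a Java runtime and make sure it is on the PATH before running the examples that follow.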

from txtai.pipeline import Textractor

# Create textractor model

textractor = Textractor()

Extract text

The example below shows how to extract text from a file.

textractor("txtai/article.pdf")

Introducing txtai, an AI-powered search engine \nbuilt on Transformers\n\nAdd Natural Language Understanding to any application\n\nSearch is the base of many applications. Once data starts to pile up, users want to be able to find it. It’s \nthe foundation of the internet and an ever-growing challenge that is never solved or done.\n\nThe field of Natural Language Processing (NLP) is rapidly evolving with a number of new \ndevelopments. Large-scale general language models are an exciting new capability allowing us to add \namazing functionality quickly with limited compute and people. Innovation continues with new models\nand advancements coming in at what seems a weekly basis.\n\nThis article introduces txtai, an AI-powered search engine that enables Natural Language \nUnderstanding (NLU) based search in any application.\n\nIntroducing txtai\ntxtai builds an AI-powered index over sections of text. txtai supports building text indices to perform \nsimilarity searches and create extractive question-answering based systems. txtai also has functionality \nfor zero-shot classification. txtai is open source and available on GitHub.\n\ntxtai and/or the concepts behind it has already been used to power the Natural Language Processing \n(NLP) applications listed below:\n\n• paperai — AI-powered literature discovery and review engine for medical/scientific papers\n• tldrstory — AI-powered understanding of headlines and story text\n• neuspo — Fact-driven, real-time sports event and news site\n• codequestion — Ask coding questions directly from the terminal\n\nBuild an Embeddings index\nFor small lists of texts, the method above works. But for larger repositories of documents, it doesn’t \nmake sense to tokenize and convert all embeddings for each query. txtai supports building pre-\ncomputed indices which significantly improves performance.\n\nBuilding on the previous example, the following example runs an index method to build and store the \ntext embeddings. 
In this case, only the query is converted to an embeddings vector each search.\n\nhttps://github.com/neuml/codequestion\nhttps://neuspo.com/\nhttps://github.com/neuml/tldrstory\nhttps://github.com/neuml/paperai\n - Introducing txtai, an AI-powered search engine built on Transformers\n - Add Natural Language Understanding to any application\n - Introducing txtai\n - Build an Embeddings index

Note that the text from the article was extracted into a single string. Depending on the article, this may be acceptable. For long articles, you'll often want to split the content into logical sections to build better downstream vectors.

Extract sentences

Sentence extraction uses a model that specializes in sentence detection. This call returns a list of sentences.

textractor = Textractor(sentences=True)

textractor("txtai/article.pdf")

['Introducing txtai, an AI-powered search engine \nbuilt on Transformers\n\nAdd Natural Language Understanding to any application\n\nSearch is the base of many applications.',

'Once data starts to pile up, users want to be able to find it.',

'It’s \nthe foundation of the internet and an ever-growing challenge that is never solved or done.',

'The field of Natural Language Processing (NLP) is rapidly evolving with a number of new \ndevelopments.',

'Large-scale general language models are an exciting new capability allowing us to add \namazing functionality quickly with limited compute and people.',

'Innovation continues with new models\nand advancements coming in at what seems a weekly basis.',

'This article introduces txtai, an AI-powered search engine that enables Natural Language \nUnderstanding (NLU) based search in any application.',

'Introducing txtai\ntxtai builds an AI-powered index over sections of text.',

'txtai supports building text indices to perform \nsimilarity searches and create extractive question-answering based systems.',

'txtai also has functionality \nfor zero-shot classification.',

'txtai is open source and available on GitHub.',

'txtai and/or the concepts behind it has already been used to power the Natural Language Processing \n(NLP) applications listed below:\n\n• paperai — AI-powered literature discovery and review engine for medical/scientific papers\n• tldrstory — AI-powered understanding of headlines and story text\n• neuspo — Fact-driven, real-time sports event and news site\n• codequestion — Ask coding questions directly from the terminal\n\nBuild an Embeddings index\nFor small lists of texts, the method above works.',

'But for larger repositories of documents, it doesn’t \nmake sense to tokenize and convert all embeddings for each query.',

'txtai supports building pre-\ncomputed indices which significantly improves performance.',

'Building on the previous example, the following example runs an index method to build and store the \ntext embeddings.',

'In this case, only the query is converted to an embeddings vector each search.',

'https://github.com/neuml/codequestion\nhttps://neuspo.com/\nhttps://github.com/neuml/tldrstory\nhttps://github.com/neuml/paperai\n - Introducing txtai, an AI-powered search engine built on Transformers\n - Add Natural Language Understanding to any application\n - Introducing txtai\n - Build an Embeddings index']

Now the document is split up at the sentence level. These sentences can be fed to a workflow that adds each sentence to an embeddings index. Depending on the task, this may work well. Alternatively, it may be even better to split at the paragraph level.
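The sentences in the output above still carry line breaks from the PDF layout. Before handing them to an indexing step, it may be worth normalizing them. The sketch below is one possible approach using only the standard library: it collapses whitespace, drops empty entries and pairs each sentence with a numeric id. The function name `prepare_sentences` and the (id, text) row shape are assumptions made for illustration, not something txtai requires.

```python
import re


def prepare_sentences(sentences):
    """Collapse whitespace runs and pair each non-empty sentence with an id."""
    rows = []
    for sentence in sentences:
        # Replace any run of whitespace (including newlines) with one space
        text = re.sub(r"\s+", " ", sentence).strip()
        if text:
            rows.append((len(rows), text))
    return rows


# Two sentences taken from the extracted output, plus an empty entry
sentences = [
    "txtai also has functionality \nfor zero-shot classification.",
    "   ",
    "txtai is open source and available on GitHub.",
]

for uid, text in prepare_sentences(sentences):
    print(uid, text)
```

The resulting rows can then be passed to whatever workflow or index build step follows.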

Extract paragraphs

Paragraph detection looks for consecutive newlines. This call returns a list of paragraphs.

textractor = Textractor(paragraphs=True)

for paragraph in textractor("txtai/article.pdf"):
    print(paragraph, "\n----")

Introducing txtai, an AI-powered search engine

built on Transformers

----

Add Natural Language Understanding to any application

----

Search is the base of many applications. Once data starts to pile up, users want to be able to find it. It’s

the foundation of the internet and an ever-growing challenge that is never solved or done.

----

The field of Natural Language Processing (NLP) is rapidly evolving with a number of new

developments. Large-scale general language models are an exciting new capability allowing us to add

amazing functionality quickly with limited compute and people. Innovation continues with new models

and advancements coming in at what seems a weekly basis.

----

This article introduces txtai, an AI-powered search engine that enables Natural Language

Understanding (NLU) based search in any application.

----

Introducing txtai

txtai builds an AI-powered index over sections of text. txtai supports building text indices to perform

similarity searches and create extractive question-answering based systems. txtai also has functionality

for zero-shot classification. txtai is open source and available on GitHub.

----

txtai and/or the concepts behind it has already been used to power the Natural Language Processing

(NLP) applications listed below:

----

• paperai — AI-powered literature discovery and review engine for medical/scientific papers

• tldrstory — AI-powered understanding of headlines and story text

• neuspo — Fact-driven, real-time sports event and news site

• codequestion — Ask coding questions directly from the terminal

----

Build an Embeddings index

For small lists of texts, the method above works. But for larger repositories of documents, it doesn’t

make sense to tokenize and convert all embeddings for each query. txtai supports building pre-

computed indices which significantly improves performance.

----

Building on the previous example, the following example runs an index method to build and store the

text embeddings. In this case, only the query is converted to an embeddings vector each search.

----

https://github.com/neuml/codequestion

https://neuspo.com/

https://github.com/neuml/tldrstory

https://github.com/neuml/paperai

- Introducing txtai, an AI-powered search engine built on Transformers

- Add Natural Language Understanding to any application

- Introducing txtai

- Build an Embeddings index

----
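To make the idea of splitting on consecutive newlines concrete, here is a minimal standalone sketch. It is an illustration of the technique, not txtai's actual implementation, and the helper name `split_paragraphs` is hypothetical.

```python
import re


def split_paragraphs(text):
    """Split text on runs of two or more newlines and drop empty chunks."""
    chunks = re.split(r"\n{2,}", text)
    return [chunk.strip() for chunk in chunks if chunk.strip()]


# Start of the extracted article text shown earlier
sample = (
    "Introducing txtai, an AI-powered search engine \nbuilt on Transformers\n\n"
    "Add Natural Language Understanding to any application\n\n"
    "Search is the base of many applications."
)

for paragraph in split_paragraphs(sample):
    print(paragraph, "\n----")
```

A single newline inside a paragraph is kept, which is why the first paragraph in the output above still spans two lines.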

Extract sections

Section extraction is format dependent. If page breaks are available, each section is a page. Otherwise, this call returns logical sections, such as those marked by headings.

textractor = Textractor(sections=True)

print("\n[PAGE BREAK]\n".join(section for section in textractor("txtai/article.pdf")))

Introducing txtai, an AI-powered search engine

built on Transformers

Add Natural Language Understanding to any application

Search is the base of many applications. Once data starts to pile up, users want to be able to find it. It’s

the foundation of the internet and an ever-growing challenge that is never solved or done.

The field of Natural Language Processing (NLP) is rapidly evolving with a number of new

developments. Large-scale general language models are an exciting new capability allowing us to add

amazing functionality quickly with limited compute and people. Innovation continues with new models

and advancements coming in at what seems a weekly basis.

This article introduces txtai, an AI-powered search engine that enables Natural Language

Understanding (NLU) based search in any application.

Introducing txtai

txtai builds an AI-powered index over sections of text. txtai supports building text indices to perform

similarity searches and create extractive question-answering based systems. txtai also has functionality

for zero-shot classification. txtai is open source and available on GitHub.

txtai and/or the concepts behind it has already been used to power the Natural Language Processing

(NLP) applications listed below:

• paperai — AI-powered literature discovery and review engine for medical/scientific papers

• tldrstory — AI-powered understanding of headlines and story text

• neuspo — Fact-driven, real-time sports event and news site

• codequestion — Ask coding questions directly from the terminal

Build an Embeddings index

For small lists of texts, the method above works. But for larger repositories of documents, it doesn’t

make sense to tokenize and convert all embeddings for each query. txtai supports building pre-

computed indices which significantly improves performance.

Building on the previous example, the following example runs an index method to build and store the

text embeddings. In this case, only the query is converted to an embeddings vector each search.

https://github.com/neuml/codequestion

https://neuspo.com/

https://github.com/neuml/tldrstory

https://github.com/neuml/paperai

[PAGE BREAK]

- Introducing txtai, an AI-powered search engine built on Transformers

- Add Natural Language Understanding to any application

- Introducing txtai

- Build an Embeddings index
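The page-or-logical-section behavior described for section extraction can be sketched in plain Python. This example rests on two assumptions that are not confirmed by the article: that page breaks appear in the extracted text as form feed characters (`\f`), and that runs of three or more newlines are a reasonable stand-in for logical section boundaries. The helper name `split_sections` is hypothetical.

```python
import re


def split_sections(text):
    """Split on form feeds when present, otherwise on runs of 3+ newlines."""
    # Assumption: a form feed marks a page break in the extracted text
    pattern = r"\f" if "\f" in text else r"\n{3,}"
    return [part.strip() for part in re.split(pattern, text) if part.strip()]


paged = "Page one text\n\nmore of page one\fPage two text"
unpaged = "Heading A\nBody A\n\n\nHeading B\nBody B"

print(split_sections(paged))
print(split_sections(unpaged))
```

With the paged input, each page becomes one section even though it contains a paragraph break. With the unpaged input, the larger gap between blocks is used instead.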