Embeddings in the Cloud

Load and use an embeddings index from the Hugging Face Hub

Embeddings is the engine that delivers semantic search. Data is transformed into embeddings vectors, where similar concepts produce similar vectors. Indexes both large and small are built with these vectors and are used to find results that have the same meaning, not necessarily the same keywords.

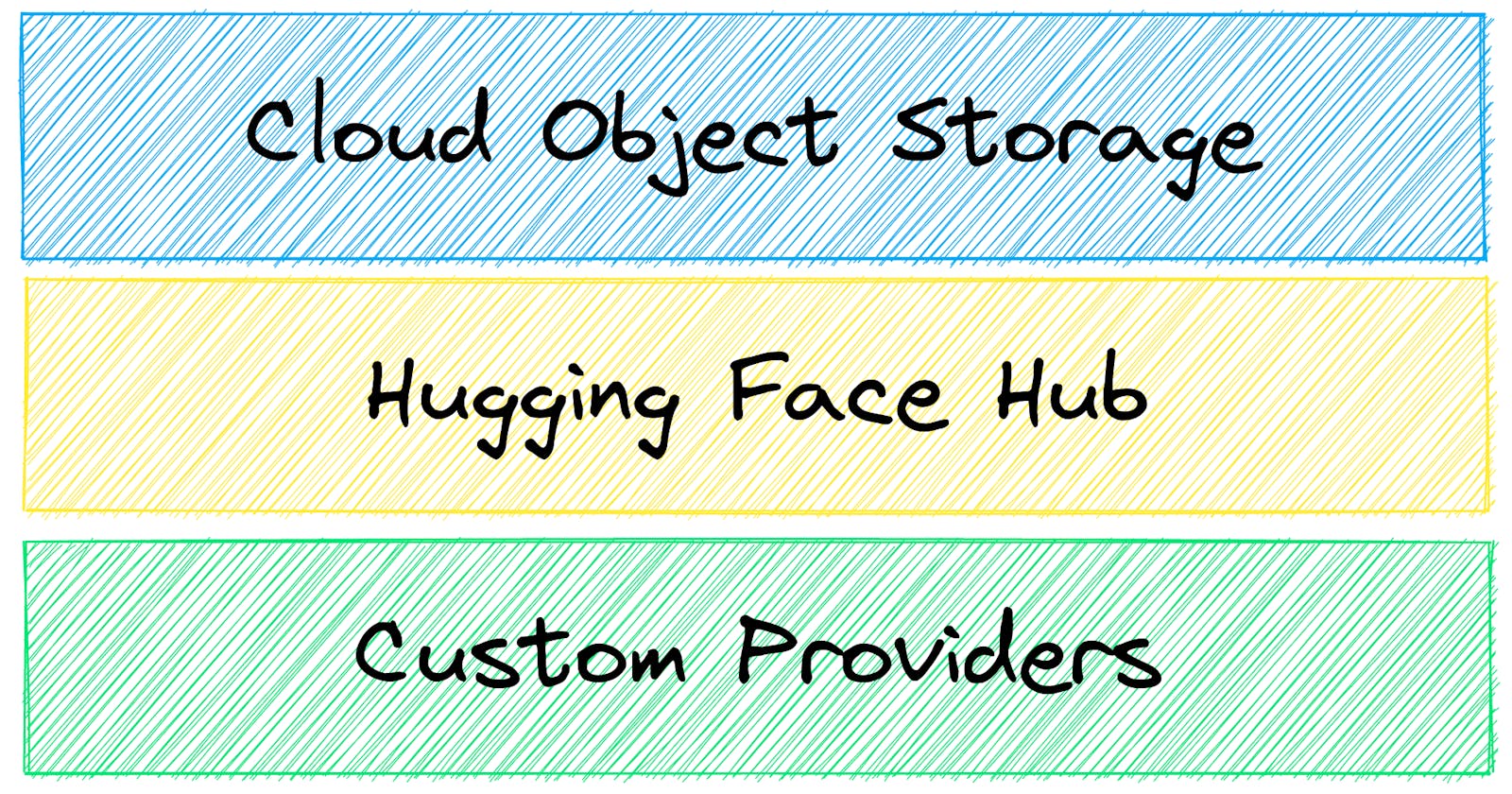
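To make "similar concepts produce similar vectors" concrete, here is a minimal sketch using cosine similarity, a common measure for comparing embeddings vectors. The three-dimensional vectors are hand-made for illustration only; real vectors come from a model and have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made toy vectors (assumption: not produced by any real model)
vectors = {
    "lottery win": [0.9, 0.1, 0.0],
    "lucky day": [0.8, 0.2, 0.1],
    "ice shelf collapse": [0.0, 0.2, 0.9],
}

# Find the candidate whose vector points in the direction closest to the query
query = vectors["lucky day"]
candidates = ["lottery win", "ice shelf collapse"]
best = max(candidates, key=lambda name: cosine_similarity(query, vectors[name]))
print(best)
```

The query shares no keywords with either candidate, yet the vector comparison still picks the closer concept.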

In addition to local storage, embeddings can be synced with cloud storage. Because a txtai index is a fully encapsulated format, cloud sync is simply a matter of moving a group of files to and from cloud storage. This can be object storage such as AWS S3, Azure Blob or Google Cloud Storage, or it can be the Hugging Face Hub. More details on available options can be found in the documentation. There is also an article available that covers how to build and store indexes in cloud object storage.
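The "group of files" idea can be sketched with the standard library alone. This is not txtai's implementation; it only illustrates that an index stored as plain files can be bundled into one archive, moved, and restored. The file names below are hypothetical placeholders, and the upload and download steps are left out.

```python
import tarfile
import tempfile
from pathlib import Path

def pack(index_dir, archive_path):
    """Bundle every file in an index directory into one compressed archive."""
    with tarfile.open(archive_path, "w:gz") as archive:
        for path in sorted(Path(index_dir).iterdir()):
            archive.add(path, arcname=path.name)

def unpack(archive_path, target_dir):
    """Restore the archived files into target_dir and return their names."""
    with tarfile.open(archive_path, "r:gz") as archive:
        archive.extractall(target_dir)
    return sorted(p.name for p in Path(target_dir).iterdir())

with tempfile.TemporaryDirectory() as tmp:
    index_dir = Path(tmp) / "index"
    index_dir.mkdir()

    # Placeholder files standing in for a saved index (names are hypothetical)
    for name in ("config", "vectors"):
        (index_dir / name).write_text("placeholder")

    # A cloud upload would send this single archive file
    archive_path = Path(tmp) / "index.tar.gz"
    pack(index_dir, archive_path)

    # A cloud download would fetch the archive before unpacking it
    restored_dir = Path(tmp) / "restored"
    restored_dir.mkdir()
    restored = unpack(archive_path, restored_dir)
    print(restored)
```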

This article will cover an example of loading embeddings indexes from the Hugging Face Hub.

Install dependencies

Install txtai and all dependencies.

# Install txtai

pip install txtai

Integration with the Hugging Face Hub

The Hugging Face Hub has a vast array of models, datasets and example applications available to jumpstart your project. This now includes txtai indexes 🔥🔥🔥

Let's load the embeddings used in the standard Introducing txtai example.

from txtai.embeddings import Embeddings

# Load the index from the Hub

embeddings = Embeddings()

embeddings.load(provider="huggingface-hub", container="neuml/txtai-intro")

Notice the two fields, provider and container. The provider field tells txtai to look for an index in the Hugging Face Hub. The container field sets the target repository.

print("%-20s %s" % ("Query", "Best Match"))
print("-" * 50)

# Run an embeddings search for each query
for query in ("feel good story", "climate change", "public health story", "war", "wildlife", "asia", "lucky", "dishonest junk"):
    # Get the top result
    result = embeddings.search(query, 1)[0]

    # Print text
    print("%-20s %s" % (query, result["text"]))

Query                Best Match
--------------------------------------------------
feel good story      Maine man wins $1M from $25 lottery ticket
climate change       Canada's last fully intact ice shelf has suddenly collapsed, forming a Manhattan-sized iceberg
public health story  US tops 5 million confirmed virus cases
war                  Beijing mobilises invasion craft along coast as Taiwan tensions escalate
wildlife             The National Park Service warns against sacrificing slower friends in a bear attack
asia                 Beijing mobilises invasion craft along coast as Taiwan tensions escalate
lucky                Maine man wins $1M from $25 lottery ticket
dishonest junk       Make huge profits without work, earn up to $100,000 a day

If you've seen txtai before, this is the classic example. The big difference, though, is that the index was loaded from the Hugging Face Hub instead of being built dynamically.

Wikipedia search with txtai

Let's try something more interesting using the Wikipedia index available on the Hugging Face Hub.

from txtai.embeddings import Embeddings

# Load the index from the Hub

embeddings = Embeddings()

embeddings.load(provider="huggingface-hub", container="neuml/txtai-wikipedia")

Now run a series of searches to show the kind of data available in this index.

import json

for x in embeddings.search("Roman Empire", 1):
    print(json.dumps(x, indent=2))

{
  "id": "Roman Empire",
  "text": "The Roman Empire ( ; ) was the post-Republican period of ancient Rome. As a polity, it included large territorial holdings around the Mediterranean Sea in Europe, North Africa, and Western Asia, and was ruled by emperors. From the accession of Caesar Augustus as the first Roman emperor to the military anarchy of the 3rd century, it was a Principate with Italia as the metropole of its provinces and the city of Rome as its sole capital. The Empire was later ruled by multiple emperors who shared control over the Western Roman Empire and the Eastern Roman Empire. The city of Rome remained the nominal capital of both parts until AD 476 when the imperial insignia were sent to Constantinople following the capture of the Western capital of Ravenna by the Germanic barbarians. The adoption of Christianity as the state church of the Roman Empire in AD 380 and the fall of the Western Roman Empire to Germanic kings conventionally marks the end of classical antiquity and the beginning of the Middle Ages. Because of these events, along with the gradual Hellenization of the Eastern Roman Empire, historians distinguish the medieval Roman Empire that remained in the Eastern provinces as the Byzantine Empire.",
  "score": 0.8913329243659973
}

for x in embeddings.search("How does a car engine work", 1):
    print(json.dumps(x, indent=2))

{
  "id": "Internal combustion engine",
  "text": "An internal combustion engine (ICE or IC engine) is a heat engine in which the combustion of a fuel occurs with an oxidizer (usually air) in a combustion chamber that is an integral part of the working fluid flow circuit. In an internal combustion engine, the expansion of the high-temperature and high-pressure gases produced by combustion applies direct force to some component of the engine. The force is typically applied to pistons (piston engine), turbine blades (gas turbine), a rotor (Wankel engine), or a nozzle (jet engine). This force moves the component over a distance, transforming chemical energy into kinetic energy which is used to propel, move or power whatever the engine is attached to. This replaced the external combustion engine for applications where the weight or size of an engine was more important.",
  "score": 0.8664469122886658
}

for x in embeddings.search("Who won the World Series in 2022?", 1):
    print(json.dumps(x, indent=2))

{
  "id": "2022 World Series",
  "text": "The 2022 World Series was the championship series of Major League Baseball's (MLB) 2022 season. The 118th edition of the World Series, it was a best-of-seven playoff between the American League (AL) champion Houston Astros and the National League (NL) champion Philadelphia Phillies. The Astros defeated the Phillies in six games to earn their second championship. The series was broadcast in the United States on Fox television and ESPN Radio. ",
  "score": 0.8889098167419434
}

for x in embeddings.search("What was New York called under the Dutch?", 1):
    print(json.dumps(x, indent=2))

{
  "id": "Dutch Americans in New York City",
  "text": "Dutch people have had a continuous presence in New York City for nearly 400 years, being the earliest European settlers. New York City traces its origins to a trading post founded on the southern tip of Manhattan Island by Dutch colonists in 1624. The settlement was named New Amsterdam in 1626 and was chartered as a city in 1653. Because of the history of Dutch colonization, Dutch culture, politics, law, architecture, and language played a formative role in shaping the culture of the city. The Dutch were the majority in New York City until the early 1700s and the Dutch language was commonly spoken until the mid to late-1700s. Many places and institutions in New York City still bear a colonial Dutch toponymy, including Brooklyn (Breukelen), Harlem (Haarlem), Wall Street (Waal Straat), The Bowery (bouwerij (\u201cfarm\u201d), and Coney Island (conyne).",
  "score": 0.8840358853340149
}

It's now probably clear how these results can be combined with another component (such as an LLM prompt) to build a conversational QA-based system!
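As a rough illustration of that idea, the sketch below turns search results into a prompt string. The `build_prompt` helper and the prompt wording are assumptions made for this example, not part of txtai, and the call to an LLM is left out.

```python
def build_prompt(question, results):
    """Combine search results into a context-grounded prompt (hypothetical helper)."""
    # Label each passage with its id so an answer can be traced back to a source
    context = "\n\n".join(f"[{r['id']}] {r['text']}" for r in results)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )

# Abbreviated result in the same shape as the search output shown earlier
results = [{
    "id": "2022 World Series",
    "text": "The Astros defeated the Phillies in six games to earn their second championship.",
    "score": 0.8889098167419434,
}]

prompt = build_prompt("Who won the World Series in 2022?", results)
print(prompt)
```

In practice, `results` would come straight from `embeddings.search(question, n)` and the prompt would be passed to whichever LLM the application uses.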

Filter by popularity

Let's try one last query. This one is generic, so similarity search alone returns many matching results.

for x in embeddings.search("Boston", 1):
    print(json.dumps(x, indent=2))

{
  "id": "Boston (song)",
  "text": "\"Boston\" is a song by American rock band Augustana, from their debut album All the Stars and Boulevards (2005). It was originally produced in 2003 by Jon King for their demo, Midwest Skies and Sleepless Mondays, and was later re-recorded with producer Brendan O'Brien for All the Stars and Boulevards.",
  "score": 0.8729256987571716
}

While the result is about Boston, it's not the most popular result. This is where the percentile field helps: it lets results be filtered based on the number of page views.

for x in embeddings.search("SELECT id, text, score, percentile FROM txtai WHERE similar('Boston') AND percentile >= 0.99", 1):
    print(json.dumps(x, indent=2))

{
  "id": "Boston",
  "text": "Boston, officially the City of Boston, is the state capital and most populous city of the Commonwealth of Massachusetts, as well as the cultural and financial center of the New England region of the United States. It is the 24th-most populous city in the country. The city boundaries encompass an area of about and a population of 675,647 as of 2020. It is the seat of Suffolk County (although the county government was disbanded on July 1, 1999). The city is the economic and cultural anchor of a substantially larger metropolitan area known as Greater Boston, a metropolitan statistical area (MSA) home to a census-estimated 4.8\u00a0million people in 2016 and ranking as the tenth-largest MSA in the country. A broader combined statistical area (CSA), generally corresponding to the commuting area and including Providence, Rhode Island, is home to approximately 8.2\u00a0million people, making it the sixth most populous in the United States.",
  "score": 0.8668985366821289,
  "percentile": 0.9999025135905505
}

This query adds a filter to only match results from the top 1% of visited Wikipedia pages.
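The effect of the percentile clause can be mimicked in plain Python. This is only a sketch of the filtering logic, not how txtai executes the query. The scores are taken from the output above, while the song's percentile is a made-up value for illustration.

```python
def filter_by_percentile(results, minimum=0.99):
    """Keep results at or above the percentile cutoff, best score first."""
    kept = [r for r in results if r["percentile"] >= minimum]
    return sorted(kept, key=lambda r: r["score"], reverse=True)

# Scores come from the article's output; the 0.75 percentile is hypothetical
results = [
    {"id": "Boston (song)", "score": 0.8729256987571716, "percentile": 0.75},
    {"id": "Boston", "score": 0.8668985366821289, "percentile": 0.9999025135905505},
]

top = filter_by_percentile(results)
print([r["id"] for r in top])
```

The song has the higher similarity score, but it falls below the cutoff, so the city article becomes the top result.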

Wrapping up

This article covered how to load embeddings indexes from cloud storage. The Hugging Face Hub is a great resource for sharing models, datasets, example applications and now txtai embeddings indexes. This is especially useful when indexing time is long or requires significant GPU resources.

Looking forward to seeing what embeddings indexes the community shares in the coming months!