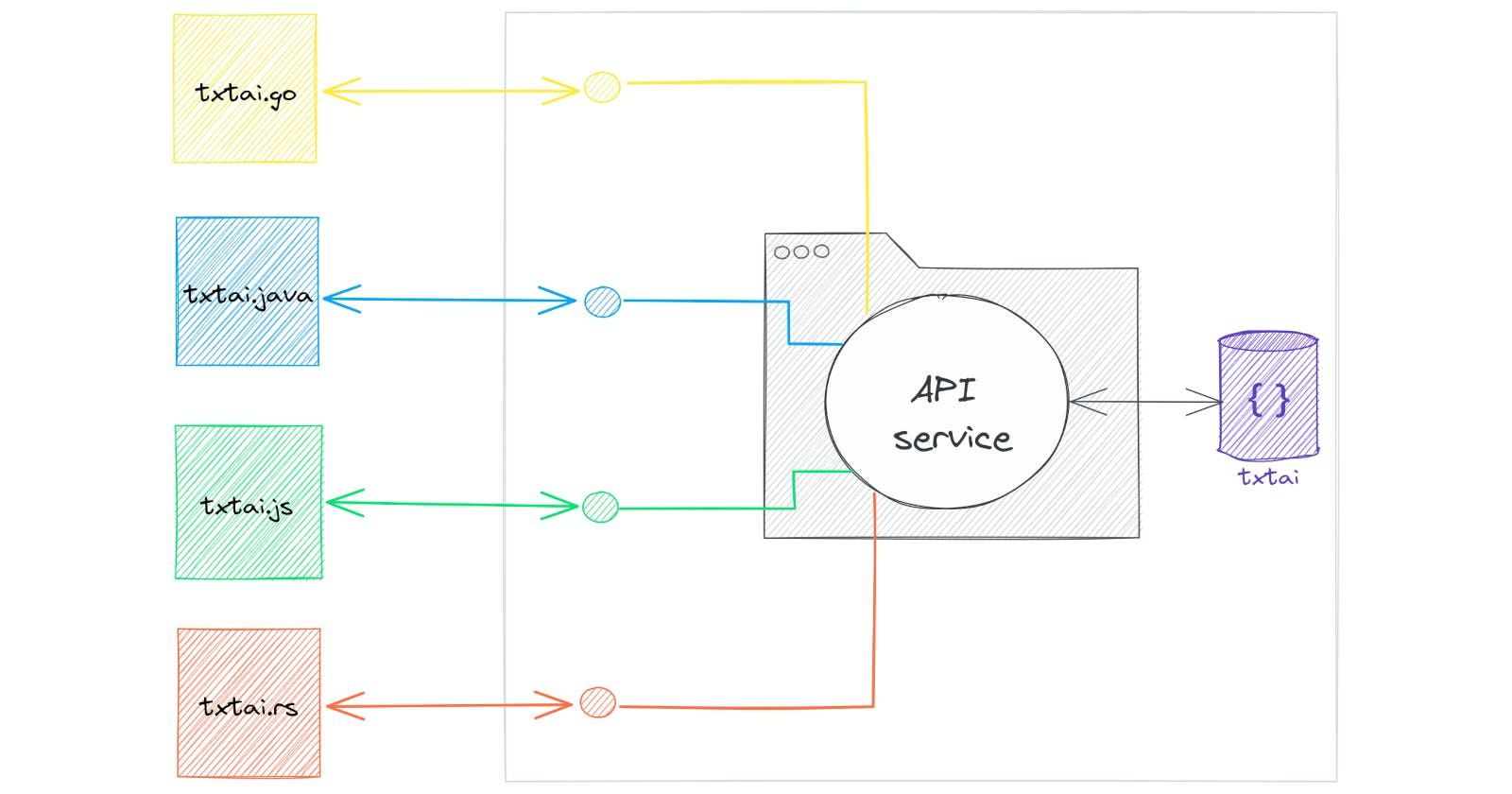

The txtai API is a web-based service backed by FastAPI. Semantic search, LLM orchestration and Language Model Workflows can all run through the API.

While the API is extremely flexible and complex logic can be executed through YAML-driven workflows, some may prefer to create an endpoint in Python.

This article introduces API extensions and shows how they can be used to define custom Python endpoints that interact with txtai applications.

Install dependencies

Install txtai and all dependencies.

# Install txtai

pip install txtai[api] datasets

Define the extension

First, we'll create an application that defines a persistent embeddings database and LLM. Then we'll combine those two into a RAG endpoint through the API.

The code below creates an API endpoint at /rag. This is a GET endpoint that takes a text parameter as input.

app.yml

# Embeddings index
writable: true
embeddings:
  hybrid: true
  content: true

# LLM pipeline
llm:
  path: google/flan-t5-large
  torch_dtype: torch.bfloat16

rag.py

from fastapi import APIRouter
from txtai.api import application, Extension


class RAG(Extension):
    """
    API extension
    """

    def __call__(self, app):
        app.include_router(RAGRouter().router)


class RAGRouter:
    """
    API router
    """

    router = APIRouter()

    @staticmethod
    @router.get("/rag")
    def rag(text: str):
        """
        Runs a retrieval augmented generation (RAG) pipeline.

        Args:
            text: input text

        Returns:
            response
        """

        # Run embeddings search
        results = application.get().search(text, 3)
        context = " ".join([x["text"] for x in results])

        prompt = f"""
        Answer the following question using only the context below.

        Question: {text}
        Context: {context}
        """

        return {
            "response": application.get().pipeline("llm", (prompt,))
        }
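The prompt assembly inside the /rag handler can be pulled into a small helper so it can be checked without a running API or model. This is a minimal sketch, assuming search results are dictionaries with a "text" field (which is the case when content storage is enabled). The name `build_prompt` and the sample results are hypothetical and not part of txtai.

```python
def build_prompt(question, results):
    """
    Builds a RAG prompt from a question and a list of search results.

    Hypothetical helper for illustration; not part of txtai.
    """

    # Join the text of each search result into a single context string
    context = " ".join(x["text"] for x in results)

    return (
        "Answer the following question using only the context below.\n\n"
        f"Question: {question}\n"
        f"Context: {context}\n"
    )


# Example with made-up search results
results = [
    {"id": "0", "text": "First passage.", "score": 0.9},
    {"id": "1", "text": "Second passage.", "score": 0.8},
]
prompt = build_prompt("What is in the passages?", results)
```

Keeping the prompt logic in a plain function like this makes it easy to adjust the wording or the number of passages without touching the routing code.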

Start the API instance

Let's start the API with the RAG extension.

CONFIG=app.yml EXTENSIONS=rag.RAG nohup uvicorn "txtai.api:app" &> api.log &

sleep 60
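A fixed sleep is fine for a notebook, but model loading time varies between machines. As an alternative sketch, the helper below polls until something accepts TCP connections on the API port. The name `wait_for_port` is hypothetical, and port 8000 is assumed because it is the uvicorn default and the port used by the client code in this article.

```python
import socket
import time


def wait_for_port(host, port, timeout=60.0, interval=0.5):
    """
    Polls until a TCP connection to (host, port) succeeds or timeout elapses.

    Returns True if the port accepted a connection, False otherwise.
    """

    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # create_connection raises OSError while nothing is listening
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            time.sleep(interval)

    return False
```

A call such as `wait_for_port("localhost", 8000, timeout=120)` could then replace the fixed wait. An open port only shows that the server is accepting connections, so a request to a known endpoint is still the more thorough check.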

Create the embeddings database

Next, we'll create the embeddings database using the ag_news dataset, a collection of news stories from the mid-2000s.

from datasets import load_dataset
import requests

ds = load_dataset("ag_news", split="train")

# API endpoint
url = "http://localhost:8000"
headers = {"Content-Type": "application/json"}

# Add data
batch = []
for text in ds["text"]:
    batch.append({"text": text})
    if len(batch) == 4096:
        requests.post(f"{url}/add", headers=headers, json=batch, timeout=120)
        batch = []

if batch:
    requests.post(f"{url}/add", headers=headers, json=batch, timeout=120)

# Build index
index = requests.get(f"{url}/index")
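The upload loop above is an instance of fixed-size batching: accumulate items, flush when the batch is full, then flush whatever is left at the end. Here is a standalone sketch of that pattern, using a hypothetical `batched` helper and plain integers in place of ag_news rows:

```python
def batched(items, size):
    """
    Yields lists of up to `size` items; the final list may be shorter.

    Hypothetical helper mirroring the upload loop; not part of txtai.
    """

    batch = []
    for item in items:
        batch.append(item)
        if len(batch) == size:
            yield batch
            batch = []

    # Flush any remaining items
    if batch:
        yield batch


batches = list(batched(range(10), 4))
print(batches)
# [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```

With a helper like this, the upload reduces to one POST to /add per yielded batch, and the final partial batch is handled in the same place as the full ones.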

Run queries

Now that we have a knowledge source indexed, let's run a set of queries. The code below defines a function that calls the /rag endpoint and returns the response. Keep in mind this dataset is from 2004, so "current" in the answers means current as of then.

While the Python Requests library is used in this article, this is a simple web endpoint that can be called from any programming language.

def rag(text):
    # Pass the query through params so Requests URL-encodes it
    return requests.get(f"{url}/rag", params={"text": text}).json()["response"]

rag("Who is the current President?")

'George W. Bush'

rag("Who lost the presidential election?")

'John Kerry'

rag("Who won the World Series?")

'Boston'

rag("Who did the Red Sox beat to win the world series?")

'Cardinals'

rag("What major hurricane hit the USA?")

'Charley'

rag("What mobile phone manufacturer has the largest current marketshare?")

'Nokia'
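Since /rag is a plain GET endpoint, the Requests dependency is optional. The sketch below builds the same call using only the Python standard library. The names `rag_url` and `rag_stdlib` are hypothetical, and the base URL assumes the API is running locally on port 8000 as in the examples above.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def rag_url(base, text):
    """
    Builds the /rag URL with the text parameter percent-encoded.
    """

    return f"{base}/rag?{urlencode({'text': text})}"


def rag_stdlib(text, base="http://localhost:8000"):
    """
    Calls the /rag endpoint and returns the "response" field.
    Requires the API from this article to be running.
    """

    with urlopen(rag_url(base, text), timeout=120) as response:
        return json.load(response)["response"]


print(rag_url("http://localhost:8000", "Who won the World Series?"))
# http://localhost:8000/rag?text=Who+won+the+World+Series%3F
```

Encoding the query explicitly matters once questions contain characters such as `&` or `#`, which would otherwise be interpreted as part of the URL structure.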

Wrapping up

This article showed how a txtai application can be extended with custom endpoints in Python. While applications have a robust workflow framework, it may be preferable to write complex logic in Python, and API extensions make that possible.